📝 Editor’s Note

AI chatbots may seem neutral, but they are shaped by the companies that build them. Unlike search engines, which show links, chatbots create direct answers, so small hidden choices can influence what people see and believe. This article explains why that matters and why transparency is important.

🔎 Why This Matters

- Chatbots do not just show information. They decide how it is explained

- Hidden systems inside AI can shape or filter answers without users knowing

- If a few companies control AI, they can influence public opinion at scale

📘 Simple Guide To Key Terms

- Algorithm: Rules a computer follows to decide what to show or say

- Training Data: The information used to teach the AI

- Reinforcement Learning: A process where answers are reviewed so the AI improves

- System Prompt: Hidden instructions that guide how the chatbot responds

- Safety Filters: Controls that block or change certain questions or answers

- RAG: When a chatbot pulls in outside information before answering

By Marc Faddoul, Project Syndicate | March 17, 2026

Liberal democracies are setting the stage for techno-authoritarian drift by giving private companies centralized, unaccountable power over AI infrastructure. Given the far-reaching social and political harms associated with unaccountable social-media platforms, shouldn't we know better?

PARIS – Algorithms are not value-neutral. Yet for over a decade now, we have allowed Big Tech to deploy them as the gatekeepers to our information ecosystem, without demanding transparency or accountability in return. The consequences have included the amplification of polarizing and sensationalist content, veiled personalized advertising, the proliferation of monopolistic behaviors, and forms of influence over public discourse that are antithetical to democratic deliberation.

Even though we had to learn the hard way what happens when critical information infrastructure is handed over to corporate interests without oversight, we are now repeating the same mistake with AI chatbots – and the stakes could be far greater. Chatbots do not simply curate existing information; they generate and frame it. Facebook and Google decided which news articles to show you, whereas tools like ChatGPT, Claude, and Gemini synthesize that information into authoritative-sounding answers.

This distinction matters, because the shift from curator to editor is making undue influence even less visible and more pernicious. We are once again ceding unprecedented power over the information infrastructure of the future to private corporations, without even demanding independent oversight. The most pressing threat is not that these AI systems could go rogue, but that a handful of self-interested parties are quickly becoming the information gatekeepers for a large and growing share of the population.

The current chatbots are not simply large language models (LLMs). Rather, they rest on several opaque algorithmic layers that factor into a model’s development and deployment, and each can be an entry point for platforms or other parties to shape information according to their interests.

There are at least five layers to this “algorithmic influence stack.” The first is training-data curation. In determining which data is included or excluded during training, platforms make opaque decisions about sources, how to weigh different perspectives, and what content to filter out. These choices then shape the model’s worldview. For example, in October 2025, Elon Musk launched Grokipedia to provide training data for his Grok chatbot. A corporate-controlled encyclopedia, Grokipedia is meant to provide an “anti-woke” alternative to Wikipedia, which, with its community-governance model, has long served as a widely trusted source of information on the internet.

The second layer is reinforcement learning through human and AI feedback, the process that transformed LLMs from unpredictable text generators into usable “assistants.” During this “post-training” stage of a model’s development, human reviewers rate outputs to guide the system toward desired behaviors, like helpfulness or politeness. For now, these human evaluations remain a major, largely invisible, part of the AI industry. But they are increasingly being replaced by specialized AI “teachers” that are supposed to align the core model with predefined principles that have been encoded in a “constitution.”

The third layer is web search. When chatbots search online or access digital databases, retrieval-augmented-generation (RAG) systems determine which pieces of information to feed into the model’s response. This function mirrors that of traditional search engines, which prioritize certain sources over others. And as with search engines, the introduction of advertisements in chatbot responses – which ChatGPT has announced for 2026 – will raise additional concerns about objectivity.

The fourth layer comprises system prompts. Since these kick in when a chatbot is generating an answer, they allow platforms to alter a chatbot’s behavior without retraining it. For example, because Grok’s system prompt was made public last year, we know that it includes directives such as “do not shy away from making claims which are politically incorrect.” (ChatGPT, Claude, and Gemini also use system prompts, but these remain secret.)

The final layer is safety filters. Before a chatbot query reaches the model, input filters determine whether it is “acceptable.” Similarly, after the model generates a response, output filters can modify, censor, or sanitize content before you see it. While platforms have legitimate reasons to block certain queries (like those seeking instructions on how to make a bomb), the opacity of these filters leaves important questions unanswered. Model developers could build the infrastructure for systematic censorship, and we would not know it. Chinese chatbots’ “safety” filters, for example, censor all references to the Tiananmen Square massacre.

Political and corporate interests are already shaping this algorithmic influence stack, just as chatbots are being deployed on a global scale. Following Donald Trump’s second inauguration, Apple updated its AI training instructions to avoid labeling MAGA supporters as “radical” or “extreme.” Last summer, Reuters discovered that Meta had updated its internal AI guidelines to loosen safeguards preventing its chatbots from making racist statements or engaging in “flirty” behavior with minors, among other things. And last May, Grok began amplifying unsubstantiated and out-of-context claims of “white genocide” in South Africa (Musk himself is a white South African). While xAI, the company behind Grok, blamed “unauthorized modifications,” such “bugs” are common, and they all seem to be ideologically consistent with Musk’s own views.

Political manipulation through chatbots has already proven to be effective. A 2025 Nature study showed that chatbots trained to argue for a specific candidate could sway moderate and undecided voters (the cohorts that decide most elections) with remarkable ease.

Unlike authoritarian systems that exert explicit control over information, democracies depend on a plurality of sources and transparent, accountable information ecosystems. To allow centralized, unaccountable power over AI infrastructure is to invite techno-authoritarian drift, because it is easy to see how each layer of the algorithmic influence stack can be instrumentalized to amplify or suppress certain views without the need for overt censorship.

Last December, the European Commission fined X €120 million ($138 million) for “breaching its transparency obligations under the Digital Services Act.” Predictably, X and its defenders framed the move as an attack on free speech. But transparency is central to the defense of free expression. Without it, we cannot know who is being censored or what influences are being brought to bear on the media we all consume.

The rise of social media taught us what happens when accountability lags behind adoption. We cannot afford to repeat the same mistakes with systems that hold even greater power over public knowledge.

Marc Faddoul is Director and Co-Founder of AI Forensics.

Copyright Project Syndicate

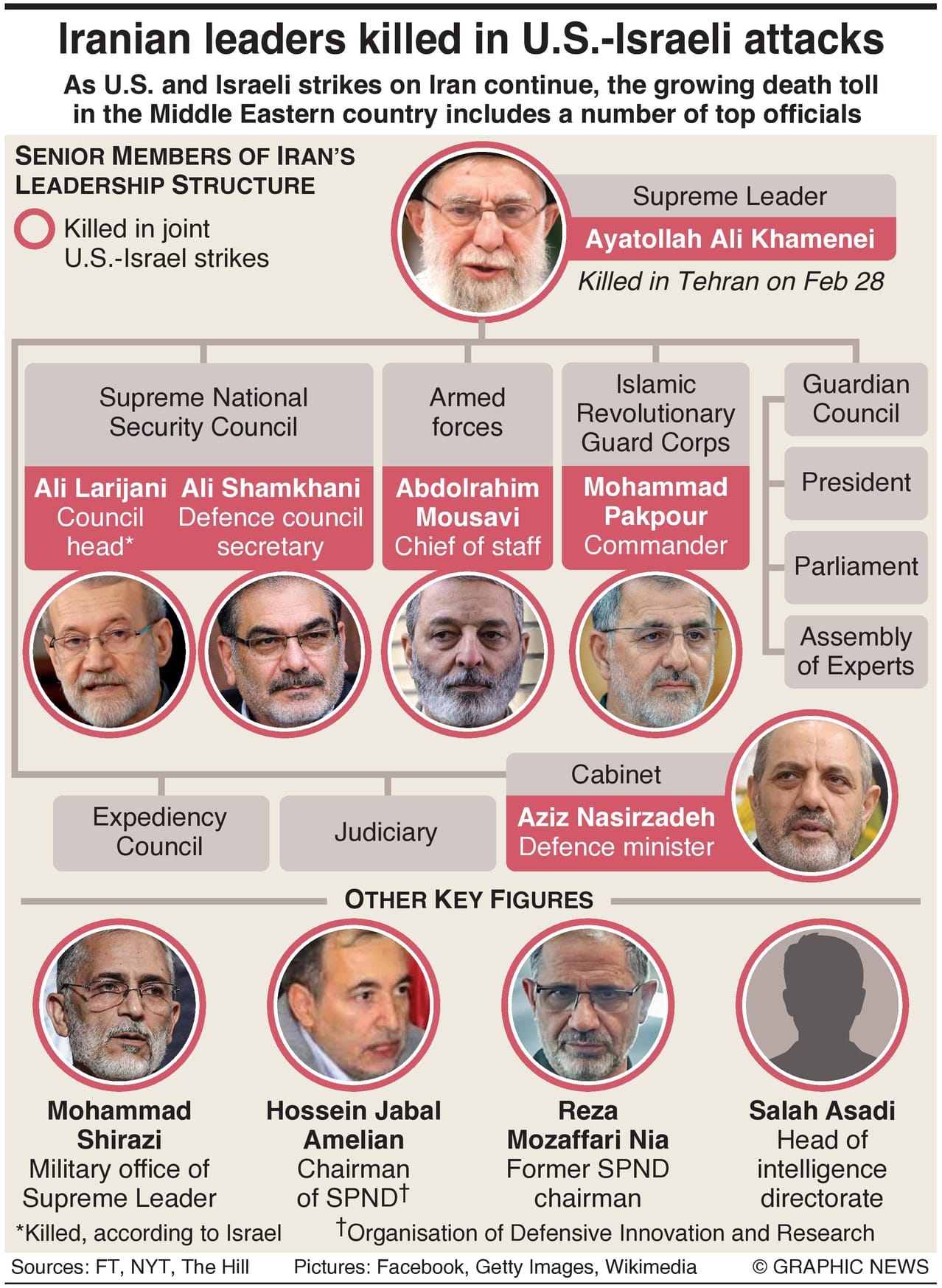

Who Are Iran’s Senior Figures Killed In U.S.-Israeli Attacks?

Israel says it has killed Iran’s national security chief, Ali Larijani, in overnight strikes – a claim that, if confirmed, would make him the most senior Iranian figure to die in the war since Ali Khamenei was killed on its opening day.

Israel's Defense Minister, Israel Katz, said on Tuesday that Ali Larijani had been killed in an Israeli strike, in a claim that has not yet been confirmed by Iranian authorities.

Larijani was last seen in public on Friday during a Quds Day march in Tehran. Officials in Iran have not confirmed whether he has been killed or injured, leaving uncertainty over his fate.

If confirmed, his death would represent a significant blow to the leadership of the Islamic Republic. Over recent decades, Larijani had emerged as a central figure in Iran, and his role grew further following the killing of Ali Khamenei.

Despite his prominence within Iran’s power structure, Larijani was often regarded as a pragmatic figure. Although he had adopted increasingly forceful rhetoric in recent weeks, observers had long viewed him as a flexible operator capable of negotiation and engagement.

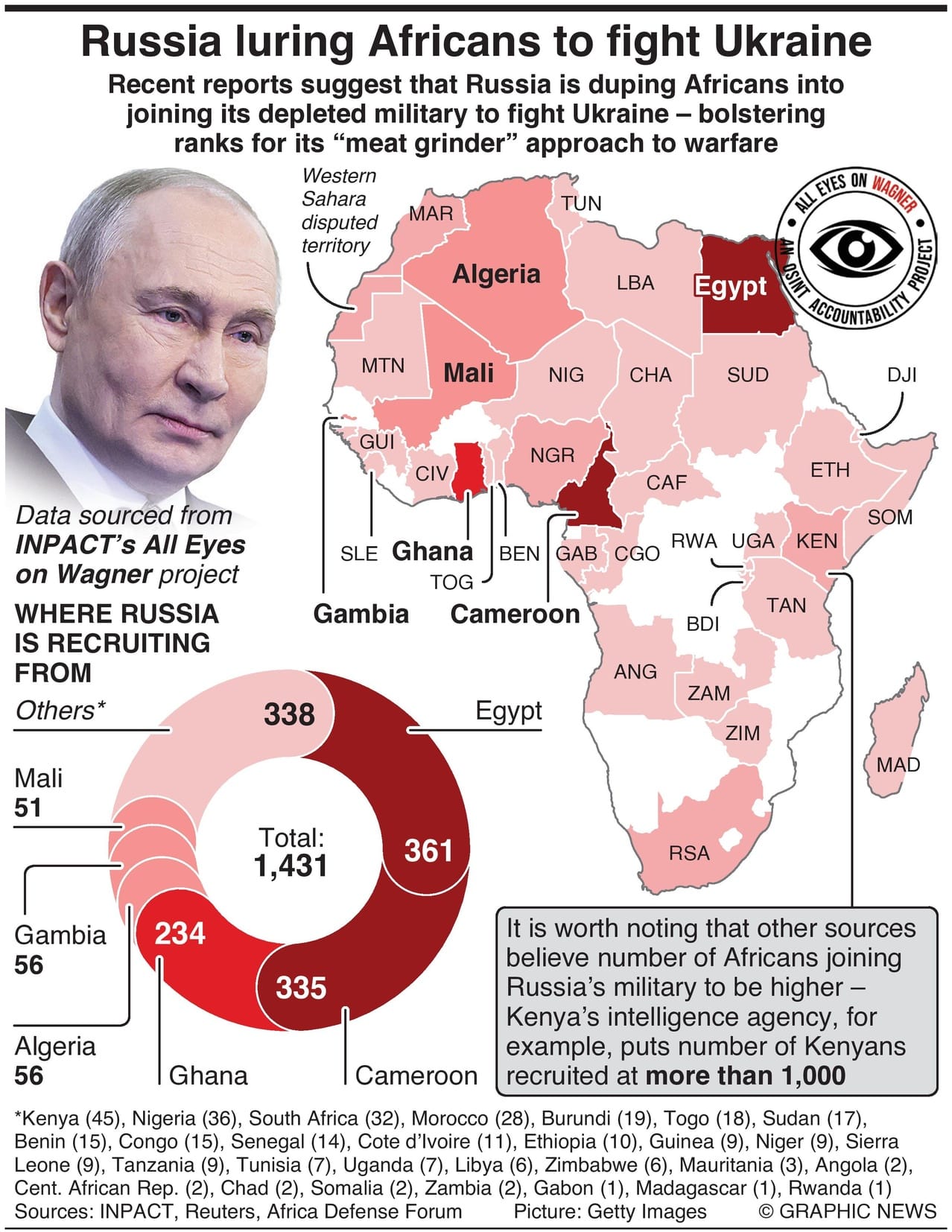

Russia Boosting Depleted Forces With African Recruits

Recent reports suggest that Russia is duping Africans into joining its depleted military to fight Ukraine – bolstering ranks for its “meat grinder” approach to warfare.

Kenya’s Cabinet Secretary for Foreign Affairs, Musalia Mudavadi, has been visiting Russia this week amid growing pressure at home to urge Moscow to stop recruiting Kenyan citizens into its military.

These reports have highlighted the extent of efforts to enlist Africans into Russia’s depleted forces, often through intermediaries who advertise well-paid civilian jobs as a lure.

Ukraine claims that more than 1,700 Africans are currently fighting on Russia’s side.

A report by Kenya’s intelligence agency suggests that over 1,000 Kenyans alone have been recruited.

Meanwhile, INPACT, a Swiss-based investigative organization, has verified several recruitment lists it obtained. Based on this data, it concludes that 1,431 individuals from across Africa have been taken by Russia (although it records only 45 Kenyans).

Regardless of the exact figures, analysts believe the real number is far higher and that the recruitment forms part of a deliberate strategy to bolster Russia’s ranks with waves of soldiers for what has been described as its “meat grinder” approach to warfare.

Russian authorities, however, deny any illegal recruitment of African citizens to fight in Ukraine.

Catch up on today’s highlights, handpicked by our News Editor at TIPP Insights.

1. Iran Security Chief Killed In Airstrike, Says Israel

2. What Is Delaying Trump’s Coalition To Secure The Strait Of Hormuz?

3. Telegram Outages Surge As Kremlin Tightens Internet Control

4. Iran In Talks With FIFA Over Venue Shift From U.S. To Mexico Amid War

5. Iran War: How Oil Disruptions Are Impacting The Global Economy

6. Top U.S. Counterterror Official Resigns Over Iran War

7. China And Vietnam Expand Naval Drills With Live Fire

8. Treasury Yields Rise As Oil Surge And Iran Tensions Rattle Markets

9. Federal Judge Stops Kennedy’s CDC Panel Shake-Up

10. Amazon Pushes Faster Deliveries With New 1-Hour Service

11. U.S. Travel Hit By Rising TSA Absences During Shutdown

12. Trump Plans White House Event Ahead Of Biofuels Decision

13. New Democratic Proposal Aims To Break Shutdown Deadlock

14. Trump Comments Add To Tensions As Cuba Loses Power

editor-tippinsights@technometrica.com