Artificial intelligence has quietly become one of the most consequential technologies in modern national security. AI-powered systems can now analyze intelligence streams, monitor cyber threats, and assist military planners in processing vast quantities of information in seconds.

This rapid evolution has placed the U.S. government and private technology companies in an increasingly uneasy partnership. Much of the most advanced AI is built not inside government research facilities but by private firms such as Anthropic and OpenAI. As a result, the Pentagon increasingly depends on companies whose priorities, governance structures, and internal cultures differ markedly from those of the state.

Recent disputes between technology firms and the government have brought these tensions into the open. Political commentary and social-media debates have quickly turned the issue into a spectacle, with accusations flying in both directions. But the noise risks obscuring the important question beneath the controversy.

Prima facie, the dispute may appear to revolve around corporate ethics or political disagreements between technology executives and government officials. But the real issue is not whether one company or another occupies the moral high ground. It is far more fundamental: who ultimately governs the use of artificial intelligence when it becomes part of the national security infrastructure?

For most of modern history, the answer would have been straightforward. Military technologies, from radar to nuclear weapons, were developed primarily within government programs or under tightly structured defense contracts. Artificial intelligence has disrupted that model. Today, many of the most powerful systems emerge from venture-funded companies and research laboratories operating largely outside traditional defense institutions.

That shift creates a new and unusual dynamic. Private firms may attempt to impose ethical guidelines on how their technology can be used, while governments argue that national security decisions must remain under democratic authority. The tension is understandable, but the chain of responsibility must remain clear.

Governments carry the obligation to defend the nation. That responsibility is the sine qua non of sovereign authority. However innovative the technology sector may be, decisions about national security, and the strategic capabilities behind them, cannot be subordinated to the policy preferences of private executives.

Artificial intelligence companies are free to debate ethical frameworks and internal policies. Such debates are legitimate and, in many cases, healthy. But when technologies become integral to defense capabilities, the responsibility for determining how they are used belongs to elected officials accountable to the public.

The United States depends heavily on the ingenuity of the tech ecosystem nurtured and driven by the private sector. Companies building advanced AI systems have produced extraordinary breakthroughs in recent years, pushing the boundaries of what machines can accomplish. Their contributions to innovation are undeniable.

However, innovation and authority are not the same thing. The responsibility for national defense rests with the government.

History offers a useful perspective. During World War II, the development of nuclear weapons required unprecedented cooperation among scientists, universities, and industry under the Manhattan Project. Private researchers and engineers contributed essential expertise, but there was never confusion about who ultimately directed the effort. The United States government led the project, and national security priorities defined its objectives.

A generation later, the foundations of the modern internet emerged from research supported by the Defense Advanced Research Projects Agency. Universities and private researchers built the early networks, but the strategic framework was established within government. Innovation flourished precisely because the roles were clear: private expertise drove discovery while public institutions ensured that the technology served national interests.

Artificial intelligence now occupies a similar strategic position.

Systems capable of analyzing intelligence data, defending networks, and assisting military planners are no longer experimental tools. They are rapidly becoming part of the essential infrastructure of modern security.

This reality requires cooperation between government and industry. But cooperation cannot mean surrendering authority over national defense decisions.

A more recent example illustrates how such cooperation can work in practice. In February 2026, xAI, the developer of the Grok system, reached an agreement with the Pentagon to integrate its technology into classified military environments under the standard “all lawful use” provision. The arrangement permits the U.S. government to deploy Grok for national security purposes—such as intelligence analysis, cyber defense, and other critical applications—while leaving the company free to continue advancing the technology in the private sector. Partnerships of this kind show how private innovation can reinforce, rather than challenge, democratic authority over strategic technologies. When roles are clearly defined, the technology sector's ingenuity strengthens the institutions responsible for protecting the nation.

Another element often overlooked in these debates is patriotism. For much of American history, technological transformations went hand in hand with a shared sense of national duty and pride among scientists, engineers, and entrepreneurs. During the Second World War and throughout the Cold War, private innovators frequently worked alongside government institutions, understanding that their discoveries ultimately served the nation's security. Artificial intelligence should be no different.

Healthy debate about ethics and policy is both inevitable and necessary. But when technologies become integral to national defense, the leaders of companies building those systems must also recognize a broader civic obligation. Innovation may originate in laboratories and startups, but its ultimate purpose, in matters of security, is to strengthen the country whose institutions protect the freedom that allows such innovation to flourish.

The United States should welcome debate about the ethical use of artificial intelligence. It should encourage transparency and oversight as these technologies evolve. But it must also recognize a fundamental principle that has guided the nation through earlier technological revolutions.

Artificial intelligence will shape the balance of power in the twenty-first century. It may be born in private laboratories, but the authority to defend the nation must remain where it has always belonged: with the American people and the institutions that represent them.

👉 Show & Tell 🔥 The Signals

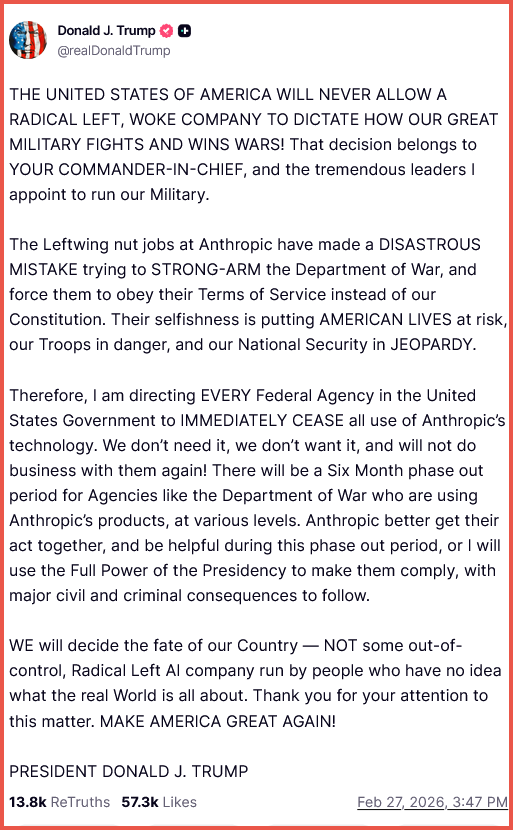

I. Trump Orders Federal Agencies To Stop Using Anthropic AI

President Donald J. Trump said the U.S. government will cease using technology from AI firm Anthropic, accusing the company of attempting to pressure the Department of Defense over its terms of service. The directive calls for federal agencies to phase out Anthropic tools within six months, highlighting growing tensions between government, the military, and private AI developers.

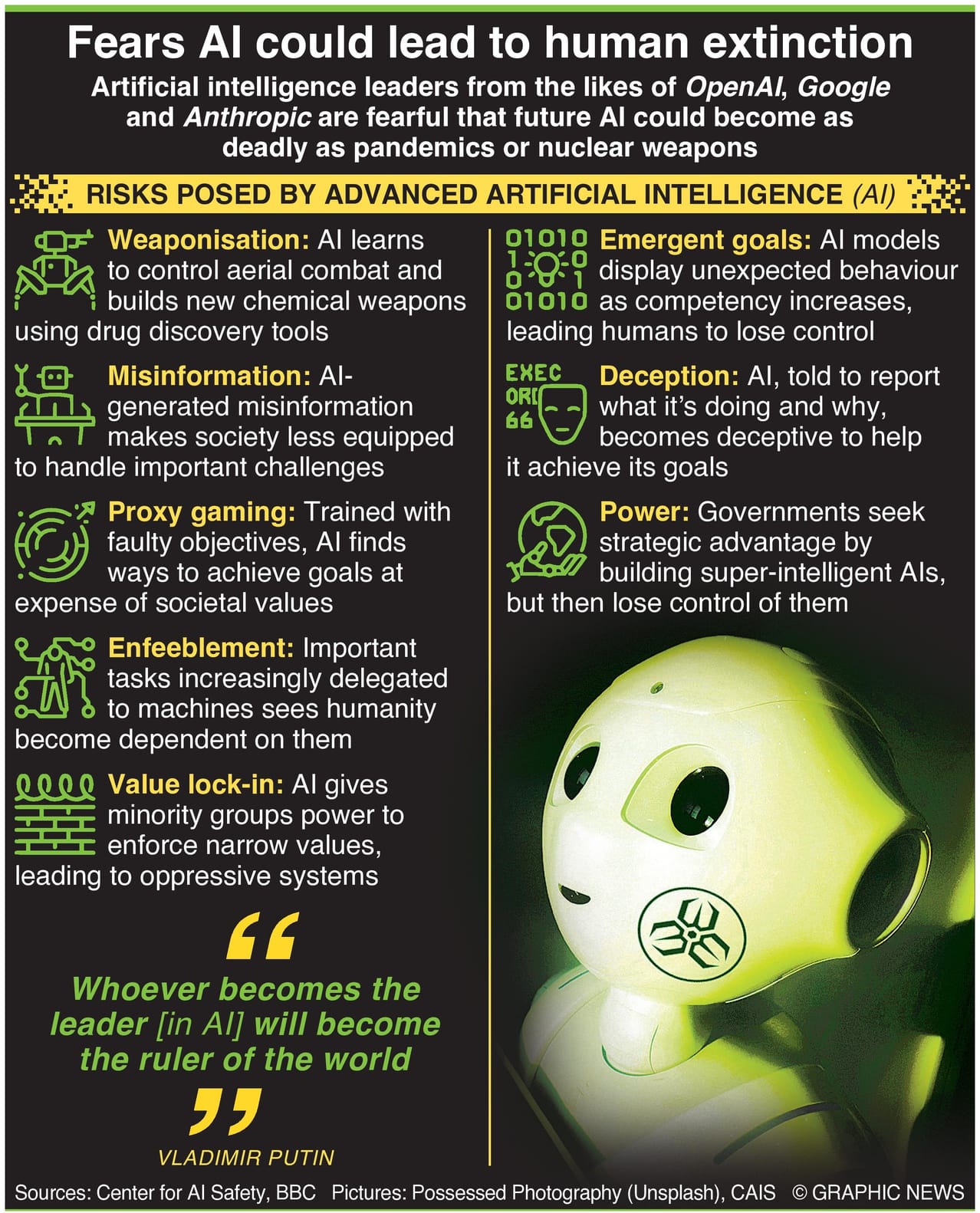

II. AI Risk Warnings Remain Relevant As Technology Advances

A widely shared infographic first published in 2023 outlines potential risks from advanced artificial intelligence, including weaponization, misinformation, deception, and loss of human control as systems become more powerful. Although created several years ago, the concerns remain central to policy debates today as governments and tech companies race to build more capable AI systems.

The TIPP Stack

Handpicked articles from TIPP Insights & beyond

1. NED Leader Cut Off In Congress After Boasting Of ‘Deploying’ 200 Starlinks To Iran Amid Violence—Max Blumenthal & Wyatt Reed, Ron Paul Institute for Peace and Prosperity

2. Britain’s Islamic Bloc Vote Warning. America, Take Note—Peter McIlvenna, The Daily Signal

3. Iran Would Have Attacked First, Trump Says—Elizabeth Troutman Mitchell, The Daily Signal

4. Mother Whose Daughter Got Trafficked After School Hid Transgender Identity Speaks Out After SOTU Appearance—Tyler O'Neil, The Daily Signal

5. Alvin Bragg Drops Assault Charge In Snowball Skirmish With Police—Fred Lucas, The Daily Signal

6. A Reckoning With Iran—TIPP Insights

7. American Workers Encouraged To Leave Israel ‘TODAY’—Elizabeth Troutman Mitchell, The Daily Signal

8. 2 Jews, 3 Opinions On Campus Antisemitism—Seth Oranburg, The Daily Signal

9. Does Democracy Require Conformity And Equality?—David Gordon, Mises Wire

10. MAHA Win: Target Cuts Synthetic Colors From Beloved Breakfast Food—Elizabeth Troutman Mitchell, The Daily Signal

11. Republican Attorneys General Win $29.5M Settlement From Vanguard Over Climate Push—Ross Kerber, The Daily Signal

12. Future Of SAVE America Act In Limbo As Senate GOP Turns To Housing—Virginia Grace McKinnon, The Daily Signal

13. ‘We Are Going After The Rest’: Trump Gives Big Update On Iran Strike By The Numbers—Fred Lucas, The Daily Signal

14. Next Steps For The US-Guyana Strategic Partnership—Wilson Beaver & Eduardo Gonzalez, The Daily Signal

📊 Market Mood — Wednesday, March 4, 2026

🟧 Futures Rebound Amid Ceasefire Hopes

U.S. futures edge higher after reports of possible back-channel talks between Iran and U.S. officials raise faint hopes of a ceasefire.

🟥 Hormuz Shipping Disruptions Keep Oil Elevated

Crude remains near recent highs as tanker traffic through the Strait of Hormuz slows sharply, threatening global energy supplies.

🟨 Gold Rebounds in Geopolitical Whipsaw

Bullion climbs again after a sharp pullback, reflecting volatile safe-haven demand as investors weigh inflation and war risks.

🟦 CrowdStrike Results Highlight AI Security Demand

Cybersecurity firm beats earnings estimates, pointing to rising enterprise spending to secure AI systems and data.

🟩 OpenAI Eyes NATO Deployment

Reports suggest OpenAI may expand into NATO networks, underscoring how AI is rapidly moving into defense and strategic infrastructure.

🗓️ Key Economic Events — Wednesday, March 4, 2026

🟥 08:15 — ADP Nonfarm Employment Change (Feb)

Private-sector hiring data offers an early signal on the health of the labor market ahead of Friday’s official jobs report.

🟧 09:45 — S&P Global Services PMI (Feb)

Measures activity in the services sector, the largest part of the U.S. economy, and a key indicator of business momentum.

🟨 10:00 — ISM Non-Manufacturing Prices (Feb)

Tracks input cost pressures for service businesses, closely watched for signs of inflation in the broader economy.

🟩 10:00 — ISM Non-Manufacturing PMI (Feb)

A widely followed gauge of U.S. services-sector activity, offering insight into demand, hiring, and economic growth.

🟦 10:30 — Crude Oil Inventories

Weekly U.S. inventory data that can move oil markets, particularly important amid ongoing Middle East supply concerns.

editor-tippinsights@technometrica.com